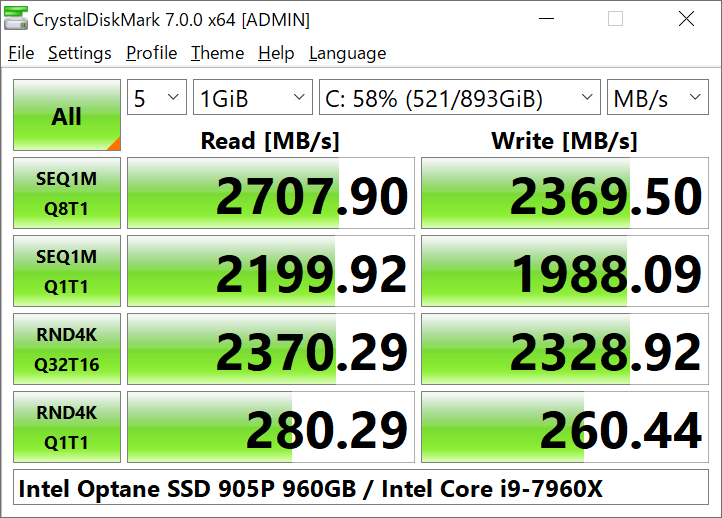

One of the most common performance bottlenecks that I see as a consultant is inadequate storage subsystem performance. There are a number of reasons for poor storage performance, but measuring it and understanding what needs to be measured and monitored is always a useful exercise. Three main metrics matter most when it comes to measuring I/O subsystem performance.

Latency

The first metric is latency, which is simply the time that it takes an I/O to complete. This is often called response time or service time. The measurement starts when the operating system sends a request to the drive (or the disk controller) and ends when the drive finishes processing the request. Reads are complete when the operating system receives the data, while writes are complete when the drive informs the operating system that it has received the data.

For writes, the data may still be in a DRAM cache on the drive or disk controller, depending on your caching policy and hardware. Write-back caching is much faster than write-through caching, but it requires a battery backup for the disk controller. For SQL Server usage, you want to make sure you are using write-back caching rather than write-through caching if at all possible. You also want to make sure your hardware disk cache is actually enabled, since some vendor disk management tools disable it by default.

Input/Output Operations per Second (IOPS)

The second metric is Input/Output Operations per Second (IOPS). This metric is directly related to latency. For example, a constant latency of 1 ms means that a drive can process 1,000 IOs per second with a queue depth of 1. As more IOs are added to the queue, latency will increase. One of the key advantages of flash storage is that it can read from and write to multiple NAND channels in parallel, and it has no electro-mechanical moving parts to slow disk access down. IOPS equals queue depth divided by latency, and IOPS by itself does not consider the transfer size for an individual disk transfer. You can translate IOPS to MB/sec and MB/sec to latency as long as you know the queue depth and transfer size.

Sequential Throughput

The third metric is sequential throughput, which is the rate at which you can transfer data, typically measured in megabytes per second (MB/sec) or gigabytes per second (GB/sec). Your sequential throughput metric in MB/sec equals the IOPS times the transfer size. For example, 135,759 IOPS times a 4,096-byte transfer size equals 556 MB/sec, while 135,759 IOPS times an 8,192-byte transfer size would be 1,112 MB/sec of sequential throughput.

Despite its everyday importance to SQL Server, sequential disk throughput often gets short-changed in enterprise storage, both by storage vendors and by storage administrators. It is also fairly common to see the magnetic disks in a direct attached storage (DAS) enclosure or a storage area network (SAN) device be so busy that they cannot deliver their full rated sequential throughput. Sequential throughput is critical for many common database server activities, including full database backups and restores, index creation and rebuilds, and large data warehouse-type sequential read scans (when your data does not fit into the SQL Server buffer pool).

One performance goal I like to shoot for on new database server builds is to have at least 1 GB/sec of sequential throughput for every single drive letter or mount point. Having this level of performance (or better) makes your life so much easier as a database professional.
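The conversions this article describes between latency, queue depth, IOPS, and throughput can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not a measurement tool: the function names are hypothetical, and it assumes decimal megabytes (1 MB = 1,000,000 bytes), which is what the 556 MB/sec and 1,112 MB/sec figures in the text imply.

```python
# Illustrative helpers only; the names are made up for this sketch.
BYTES_PER_MB = 1_000_000  # assumption: decimal megabytes, matching the article's figures


def iops_from_latency(queue_depth: int, latency_seconds: float) -> float:
    """IOPS equals queue depth divided by latency (latency in seconds)."""
    return queue_depth / latency_seconds


def throughput_mb_per_sec(iops: float, transfer_size_bytes: int) -> float:
    """Throughput in MB/sec equals IOPS times the transfer size."""
    return iops * transfer_size_bytes / BYTES_PER_MB


def latency_from_throughput(mb_per_sec: float, transfer_size_bytes: int,
                            queue_depth: int) -> float:
    """Work backwards: MB/sec -> IOPS -> latency in seconds."""
    iops = mb_per_sec * BYTES_PER_MB / transfer_size_bytes
    return queue_depth / iops


if __name__ == "__main__":
    # A constant 1 ms latency at queue depth 1
    print(f"{iops_from_latency(1, 0.001):,.0f} IOPS")

    # The article's example: the same IOPS at two transfer sizes
    for size in (4096, 8192):
        mb = throughput_mb_per_sec(135_759, size)
        print(f"135,759 IOPS x {size} bytes = {mb:,.0f} MB/sec")
```

Note that doubling the transfer size doubles the throughput only if the IOPS figure stays the same, which real drives may not sustain at larger transfer sizes.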
